UCSD CSE190/291P SP26 Syllabus and Logistics

- Nadia Polikarpova (Instructor, CSE291P)

- Joe Gibbs Politz (Instructor, CSE190)

Generative AI and Programming introduces you to the broad field of programming with generative AI tools. Prompting AI agents to write code for you only scratches the surface! We are even more motivated by identifying new kinds of applications that would be difficult or impossible to write without generative AI as a fundamental component. We want to bring our software engineering sensibilities to bear on building useful applications atop existing AI tools.

Basics

- Lecture:

- Tue/Thu 9:30am, WLH2005

- both 190 and 291P students attend the same lectures!

- Professor and staff office hours: See the calendar below

- Q&A forum: Piazza

- Course GitHub Org

Calendar & Schedule

Detailed Schedule

| Date | Topic | Released | Due |

|---|---|---|---|

| 3/31 | Introduction + Semantic Text Processing (code, pdf) | A1: Social Media Monitor | |

| 4/2 | Semantic Text Processing (cont.) | ||

| 4/7 | Semantic Text Processing (cont.) | A1 initial submission | |

| 4/9 | A1 Review Day | A1 reviews (Fri 4/10) | |

| 4/14 | Planning and Coding Applications with an Agent | A2: Document Scanner | A1 final submission (Fri 4/17) |

| 4/16 | Mixed-media and Richer Extraction | ||

| 4/21 | User in the Loop Prompts | ||

| 4/23 | Agents and Agent APIs | A2 initial submission (Fri 4/24) | |

| 4/28 | A2 Review Day | A2 reviews (Wed 4/29) | |

| 4/30 | Reasoning about Tool Use | A3 | A2 final submission (Fri 5/1) |

| 5/5 | More on Building Agents | ||

| 5/7 | |||

| 5/12 | |||

| 5/14 | |||

| 5/19 | |||

| 5/21 | |||

| 5/26 | |||

| 5/28 | |||

| 6/2 | |||

| 6/4 |

Course Components

There are three main parts of the course that have a grade: Assignments, Peer Review and Feedback, and Lecture Participation.

Assignments and Reviews

The course has several assignments that involve programming and writing.

Each assignment will have three deadlines – an initial deadline, a deadline for reviews, and a post-review deadline. Specific assignments will have different rules for working individually or in groups.

At the initial deadline, your goal is to produce a working implementation of the project description that you can demo, and others can try and give feedback on.

Your work will then be shared, presented, or demoed (depending on the specific week), other groups will give feedback to you, and you will give feedback to other groups. This may include:

- Code feedback

- Bug reports

- Feature requests

Specific assignments will have precise rubrics for what to provide in reviews. The course staff may also provide feedback in this form.

Then, for the post-review deadline, you should respond to the feedback. Again, we will develop specific requirements for each one of the assignments, but required responses may include fixing some or all of the repored bugs, adding one or more of the requested features, and describing or updating code that people were confused about.

The course staff will combine the initial submission score and your response to the feedback into an overall 0-4 score for the assignment.

Reviews you Write

As described above, you will conduct reviews of other groups' work on a regular basis. These also form a part of your grade, and are expected to be thoughtful, thorough, and high-quality. We want them to represent your genuine human feedback on the programs you work with. Specific assignments will have specific instructions on review prompts, reflections, and feedback to give.

In general, reviewing will start in-person on “review days” (even week Thursdays), and will be finished and submitted asynchronously by Friday evening. For reviews you write, we ask that you not use generative AI to generate your reviews. To motivate this, we will deduct substantial credit, including giving 0s, if we find obvious factual errors in reviews (AI hallucinated or otherwise).

Reviewees (the students whose work is reviewed) will give a score for the usefulness and thoughtfulness of the feedback. The course staff will take this feedback-on-feedback into account when assigning a 0-4 reviewing score on each reviewing pass.

Missed and Late Work

Review day requires that you have a submission to present and that you attend in person. Review day attendance is expected — you get live back-and-forth with reviewers, and your reviewers get to interact with your system directly. We acknowledge that genuine conflicts may occur. If you miss a review day or are not prepared for it, the following policy applies:

- We will post a makeup sign-up thread so other students in the same situation can find you.

- Form a group of 3 teams (pairs if 3 teams are not available) and conduct a recorded video call following the same review script used in class. You must have your video on for the call. All teams must have a submission to present by the time of the recorded session.

- If no partner is available: sign up for a TA makeup slot (we'll hold limited slots Friday–Monday).

- Submit the recording along with your review Issues by Monday 11:59pm.

If you don't complete a makeup session by then, you receive a 0 for the review score and your assignment grade is capped at 2/4.

If one member of a team misses review day, the attending partner presents the project — your team still receives reviews as normal. The absent partner must still complete the makeup process for their reviewing obligation, and this counts as a makeup.

The first makeup carries no grade penalty. Starting with the second, your assignment grade is capped at 3/4 even if the makeup is completed on time — attending review day is an important part of the course, and a pattern of absences affects your reviewers and reviewees, not just you.

Lecture Participation

In each lecture, we'll have a paper handout for review questions. These will be collected at some point during the lecture session. You get credit for answering questions on the handout with any reasonable answer; full correctness is not required. They are not quizzes; you can talk with people around you to complete them.

Check-in Interviews

The course staff may assign a student a grade of pending instead of 0-4 on any assignment. If we do, that means we want to have a check-in interview with you about the work. In a check-in interview, we'll ask you to walk through your code and explain your design decisions, how things were generated, what your contributions were, and so on.

Grading

The two components of the course has a minimum achievement level to get an A, B, or C in the course. You must reach that achievement level in all of the categories to get an A, B, or C.

- A achievement:

- ≥85% of assignment points (e.g. likely 4 assignments at 4 points each, so 14/16 points)

- ≥85% of review and feedback points (e.g. likely 4 assignments at 4 points each, so 14/16 points)

- B achievement:

- ≥75% of assignment points

- ≥75% of review and feedback points

- C achievement:

- ≥60% of assignment points

- ≥60% of review and feedback points

Below C achievement (in any category) is an F/No Pass.

Lecture participation will be used to assign +/- modifiers to grades. If lecture participation is notably low (less than 1/2 of available classes), it drops the grade by one letter grade. The 1/2 of available classes threshold will probably be 8 (20 lectures - 4 review days = 16 lectures with participation credit), but may change slightly because the second half of the class is less firmly scheduled.

Requests to change this grading policy (for a specific student or class-wide) will be denied with a link to this syllabus section. Consider this: we may, as instructors, decide for academic reasons that the most accurate way of assigning letter grades in the class needs to change (and we tend to only make changes that improve letter grades relative to this starting policy). However, it would be inappropriate for us to do so in response to student requests: that could create an appearance that we give students the grades they ask for rather than the grades they earned.

Other Policies

Generative AI Use

You are highly encouraged to use agentic coding or other generative tools for the programming parts of this course. We ourselves find them super useful for navigating the APIs of the various backend systems we used for the assignments. Use tools that credit the AI systems you use in commit messages (Claude Code does a good job of this by default, for example). Individual assignments have instructions for sharing how you used AI for learning and accountability purposes.

Prose is more complex. It's fine to use generative AI “off to the side” to help brainstorm and draft, or to use as a proofreading tool, or for rote text in documentation. You should not use generative AI to create messages you send as part of reviews, as part of Piazza posts, in reflections like your DESIGN documents, and other human-to-human communication. This is because these kinds of documents are meant for communication between humans, so it is disengenuous to put a computer on one side, and it erodes confidence in human communication when our individual voices are flattened out into one provided by a major AI company.

In all cases, you are responsible for the content you submit and send. Generative AI cannot be held responsible for a mistake in something that is published: the human author and publisher (you) is always and solely accountable.

We will not use generative AI to write substantial communications with you, either. We do use Claude Code to help brainstorm and proofread this website, and to suggest formatting and structure, and we'll likely use it to do things like generate seating charts and other rote and mechanical parts of communication, but we confidently claim authorship of the content of these pages and our messages to you. Any mistakes are our responsibility (not Claude's) and we'd like to think that any well-written insights and well-communicated passages are ours, as well :-)

Welcome to CSE190/291P

Hi!

If you're getting this, we've approved you to enroll in CSE190/291P, Generative AI and Programming, for spring 2026. We're excited to have you!

There are a few things we want you to be aware of before you enroll:

The course is new, and experimental. The field of generative AI and programming is large and moving fast; there's no way any of us can claim to be exhaustive experts. As a result, the course is going to involve learning from each other and experimenting with new tools just as much or more than Nadia and Joe telling you how things work or what to do. So expect project work, frequent demos, and peer feedback.

We don't have any guarantee of special funding for AI models, accounts, and usage for the course. We're assuming that students will be able to sign up for (potentially paid) accounts on services like Anthropic, OpenAI, Google Gemini, and so on in order to do work in the course. We don't expect it to cost more than about $100 of usage for the whole quarter, but this is an estimate, not something we can promise. Please reach out if this is a barrier for you (in particular if you would otherwise get things like textbooks paid for through a source like a scholarship).

We don't have a specific syllabus that we are committing to yet; you have to be a bit willing to figure it out along with us starting on day 1 of spring quarter 🙂. We do have a general plan for the broad themes and format of the projects:

A big theme for us is new programs we can build that would have been difficult or impossible without Generative AI. This means this course is not focused solely on using a coding agent like Claude to write existing programs faster than we could have before, or to write large programs as solo developers rather than with a team. Rather, we are interested in what new applications are possible: large-scale text processing, working with documents like PDFs or images or videos, presenting users with an agentic interface to existing but hard-to-use tools, etc. We will think about the interface we present to end users (who may or may not be software developers), and how to make generative AI systems work for them.

A general format will be open-ended projects with a round of demos, peer review, and responses to feedback. That is, you will not be submitting projects for the course staff to peruse and grade with a precise rubric for functionality. Rather, your goals will be to present and explain the work you've done, according to an assignment topic and theme, and then give and respond to feedback from your peers about the systems you all build.

In terms of preparation, we expect that the following kinds of tasks are reasonable to you, or you are confident in figuring them out on your own (potentially with the assistance of your favorite AI agents!):

- Using API keys (e.g. managing environment variables, configuration files, and source control)

- Using git and GitHub, making pull requests, creating and replying to issues

- Writing multi-file programs from scratch, including setting up tests

- Basic command-line user interface design

- Basic understanding of web servers and web pages: e.g. a web server is a program that stores some state and replies to requests with HTML pages

Feel free to reply with questions, but we may not be able to answer them all yet! If you know that you do not want to enroll, please let us know so we can admit additional students.

Best, Joe and Nadia

Unit 0: Welcome to CSE190/291P

What is this class about?

This class is about AI-powered systems: software systems that integrate generative AI as a core component.

About 5 years ago, systems like these were either impossible or required a team of ML specialists:

- A medical records system that reads doctor's notes and extracts diagnoses

- A document scanner that looks at a photo of a receipt and itemizes expenses

- A developer tool that helps users navigate a complex API through conversation

- A language learning app that generates exercises and evaluates free-form answers

Today, you can build these by plugging an LLM into a larger software system. The LLM handles the parts that require understanding or generation; the rest is regular software engineering.

This is not a class about using ChatGPT to write code faster. It's about building new kinds of programs that weren't possible before — and we (Joe and Nadia) have been having a lot of fun building them. This class is about sharing that experience with you.

Class philosophy

We don't claim to be experts — this field is evolving fast and we're learning alongside you.

What we do have is experience building these applications and strong opinions about doing it well: testing, evaluation, cost management, code quality.

We welcome your contributions. If you find a better tool, a better technique, a better way to think about something — bring it to class.

Topics

The class is organized around 4 units, each dedicated to an application domain:

- Semantic text processing — classifying, extracting, summarizing text at scale

- Image/document processing — working with PDFs, photos, scanned documents

- Agents and tools — building conversational interfaces to existing systems

- TBD — to be shaped by what we learn along the way

Each units spans 2 or 3 weeks and follows a similar structure:

- ~3-5 lectures of material

- 1 assignment released at the beginning of the unit

- Demo & peer review session at the end of the unit (during lecture), where you present your assignment and review each other's work

Logistics

See homepage for details on lectures, office hours, assignments, and more.

Unit 1: Semantic Text Processing

Q: Think of examples of existing software that processes text. What operations does it perform on strings of text to categorize them or extract information?

Programs have always been good at processing text syntactically: filtering by keyword, matching a regex, parsing a fixed format.

But what if you need to understand what the text means?

- Is this support ticket urgent?

- Does this contract clause contain a liability risk?

- Does this social media post contain an account of police misconduct?

These tasks require semantic understanding — and until recently, the only way to do them at scale was to hire humans.

LLMs change this: they let you write programs that process text based on meaning.

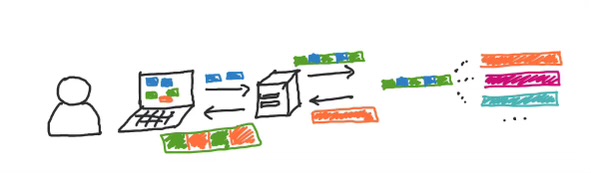

Data Sources LLM Processing Downstream

┌──────────────┐ ┌──────────────┐ ┌──────────────┐

│ social media │──┐ │ │ ┌──▶│ dashboards │

│ documents │──┼──▶│ classify / │───────────────────┼──▶│ alerts │

│ support logs │──┘ │ extract / │ structured output └──▶│ ... │

│ ... │ │ summarize │ (labels, scores) └──────────────┘

└──────────────┘ └──────────────┘

This pattern is showing up everywhere: cloud data warehouses like BigQuery and Snowflake now let you call LLMs directly from SQL queries on text columns. It's becoming a standard part of the data engineering toolkit.

Motivating Example: BlueSky Poetry

What if we wanted to monitor all poems being posted on BlueSky in real time?

Data Source LLM Processing Downstream

┌──────────────┐ ┌──────────────┐ ┌──────────────┐

│ │──┐ │ │ ┌──▶│ │

│ BlueSky │──┼──▶│ classify │───────────────────┼──▶│ CLI │

│ firehose API │──┘ │ (poetry / │ label └──▶│ │

│ │ │ not poetry) │ (boolean) └──────────────┘

└──────────────┘ └──────────────┘

^ our focus for these two weeks

Q: What makes something a poem? Could you use the string operations from your answer to the previous question to detect poetry programmatically?

Outline

- LLM APIs: calling LLMs programmatically

- Structured Output: getting JSON out of LLMs reliably

- Evaluation: measuring if the LLM is doing well

- Prompt Engineering: the craft of making the LLM do better

- Multi-Stage Pipelines: scaling up semantic processing

- Implementation: from design to code with coding agents

How do I call an LLM?

All of you have used LLMs through a web interface like ChatGPT or Google search.

But if we want to use LLMs to process text at scale, we need to call them programmatically (from our code).

There are two main options:

- Hosted APIs: a provider (OpenAI, Anthropic, Google, etc.) runs the model; you send HTTP requests and pay per token. Setup: create an account, get an API key, start making calls.

- Self-hosted models: you run an open-source model (e.g. Llama) on your own hardware. More control, but you manage the infrastructure.

In this class we'll use hosted APIs — they're the easiest way to get started and the most common in production. The basic idea of hosted APIs:

HTTP request

("Is this a poem?\n\nRoses are red,\nviolets are blue.")

┌───────────┐ ─────────────────────────────────────▶ ┌──────────────┐

│ your code │ │ LLM provider │

└───────────┘ ◀───────────────────────────────────── └──────────────┘

HTTP response

(LLM: "Yes")

Q: What are the pros and cons of hosted vs self-hosted?

Popular providers include OpenAI, Anthropic, and Google Gemini. You can use any provider in your assignments. UCSD also provides TritonAI which lets you access multiple providers from your UCSD account and gives you some free credits.

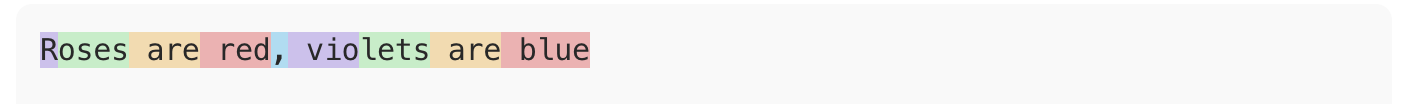

Tokens and Cost

LLM APIs charge by tokens — roughly ¾ of a word. You can see how OpenAI tokenizes text using their tokenizer web interface.

"Roses are red, violets are blue" → 9 tokens

Both input and output tokens count, and they're priced differently (output is typically more expensive — generating text is harder than reading it).

Here's what pricing looks like in practice (see full pricing):

| Model | Input | Output |

|---|---|---|

gpt-5-nano | $0.05/M tokens | $0.40/M tokens |

gpt-5-mini | $0.25/M tokens | $2.00/M tokens |

gpt-5.4 | $2.50/M tokens | $15.00/M tokens |

That's a 50x difference in input cost!

Other providers have similar tiers (e.g. Anthropic's Haiku vs Sonnet vs Opus).

Example:

BlueSky firehose produces ~1.4M posts/day. Let's assume each post is ~50 tokens on average, and we want to generate 1 token of output.

| Model | Input cost | Output cost | Total |

|---|---|---|---|

gpt-5-nano | 1.4M × 50 × $0.05/M = $3.5 | 1.4M × $0.40/M = $0.56 | ~$4.06 |

gpt-5.4 | 1.4M × 50 × $2.50/M = $175.0 | 1.4M × $15.00/M = $21.0 | ~$196.0 |

This is why model choice and pipeline design matter — we'll come back to this.

API Basics: Chat Completions

The core abstraction across all providers is the chat completion: you send a list of messages, the model sends back a response.

Here's how it looks in Python using OpenAI's API:

from openai import OpenAI

client = OpenAI() # uses OPENAI_API_KEY env variable

response = client.chat.completions.create(

model="gpt-5-mini",

messages=[

{"role": "system", "content": "You are a poetry classifier."},

{"role": "user", "content": "Is this a poem?\n\nRoses are red,\nviolets are blue."},

],

)

answer = response.choices[0].message.content

print(answer)

Q: What do you think the model will respond? (Let's find out.)

Anatomy of a Chat Completion

Messages have roles:

system: instructions to the model (persona, task description, constraints)user: the input you want the model to processassistant: the model's previous responses (used in multi-turn conversations)

Model: which LLM to use.

Response: the choices array contains the model's output.

For now we'll always get a single choice back.

Unified LLM APIs

We used OpenAI's API above, but what if we want to experiment with different providers? It would be annoying to rewrite our code just for that.

There are libraries/frameworks that provide a unified API layer over multiple providers; the simplest one is LiteLLM. It is very similar to OpenAI's API, but now we can switch e.g. to a model from Anthropic:

from litellm import LiteLLM

# No client initialization needed!

response = litellm.completion( # The only change is this line

# model="gpt-5-mini",

model="claude-haiku-4-5", # switching to an Anthropic model

messages=[

{"role": "system", "content": "You are a poetry classifier."},

{"role": "user", "content": "Is this a poem?\n\nRoses are red,\nviolets are blue."},

],

)

answer = response.choices[0].message.content

print(answer)

Outline

LLM APIs: calling LLMs programmatically[done]- Structured Output: getting JSON out of LLMs reliably

- Evaluation: measuring if the LLM is doing well

- Prompt Engineering: the craft of making the LLM do better

- Multi-Stage Pipelines: scaling up semantic processing

Structured Output

We called the LLM and got a response. Now what? We want to use that response in our code:

answer = response.choices[0].message.content

if answer == "Yes":

print("Found a poem!")

Q: Will this work? What could go wrong?

The problem with free-form text

The model might respond with:

"Yes""Yes, this is a poem.""Yes, this is a poem. It follows a classic rhyming pattern...""Certainly! This is indeed a poem."

All of these mean yes, but answer == "Yes" only matches the first one.

We could try answer.startswith("Yes") or "yes" in answer.lower(),

but this is fragile — we're back to syntactic text processing!

We need to tell the model how to respond, not just what to respond about.

Attempt 1: Ask nicely

The simplest approach: just add instructions to the prompt.

response = litellm.completion(

model="gpt-5-mini",

messages=[

{"role": "system", "content": "You are a poetry classifier. "

"Respond with only 'true' or 'false'."},

{"role": "user", "content": "Is this a poem?\n\nRoses are red,\nviolets are blue."},

],

)

answer = response.choices[0].message.content

print(answer) # "true"

This works... most of the time. But "most of the time" is annoying to deal with in code (you need error handling and retry logic).

We need more structure

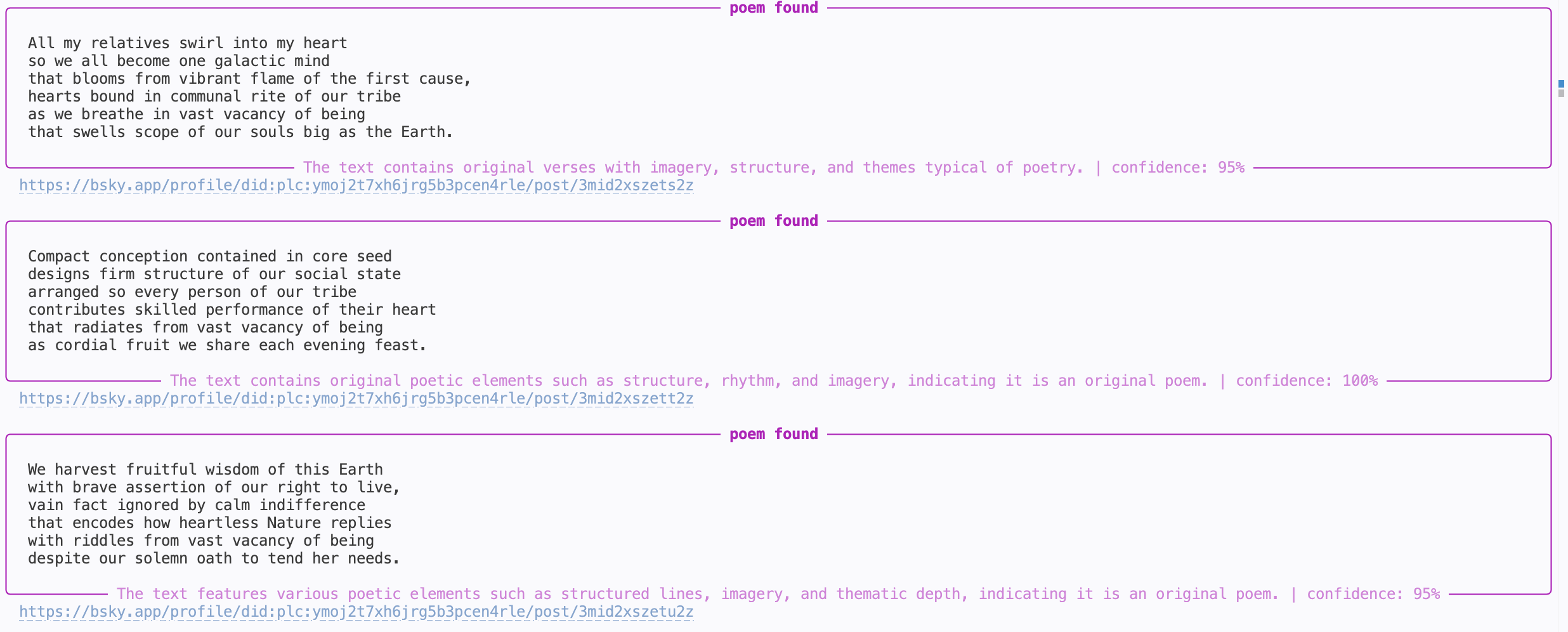

A single boolean is the simplest case, but real applications often need richer output. For our poetry monitor, we might want:

- Whether the post is a poem

- A confidence level

- Explanation of the model's reasoning

The industry standard for this is JSON:

{

"is_poem": true,

"confidence": 0.95,

"explanation": "The text follows a classic rhyming pattern with a consistent meter."

}

Q: Have you worked with JSON before? How would you parse this in Python?

JSON is convenient because:

- Every language has a library to parse it (

json.loadsin Python) - It has a well-defined schema (types, required fields, nesting)

- Modern LLMs are trained extensively on JSON and are good at producing it

Asking nicely for JSON

We can update our prompt to request JSON output:

response = litellm.completion(

model="gpt-5-mini",

messages=[

{"role": "system", "content": """You are a poetry classifier.

Respond with a JSON object with the following fields:

{

"is_poem": true/false,

"confidence": 0.0-1.0,

"explanation": "brief reason (<30 words)"

}"""},

{"role": "user", "content": "Is this a poem?\n\nRoses are red,\nviolets are blue."},

],

)

import json

answer = response.choices[0].message.content

result = json.loads(answer)

print(result["is_poem"]) # True

print(result["confidence"]) # 0.95

print(result["explanation"]) # "The text follows a classic rhyming pattern with a consistent meter."

This works well with modern models, but again works most of the time

(e.g. some models like to put backticks around the JSON, which breaks json.loads).

If you add response_format={"type": "json_object"} to your API call,

some providers will do their best to ensure the output is valid JSON,

but there is no guarantee about which fields it will have.

Attempt 2: Constrained decoding

Instead of asking the model to produce JSON and hoping for the best, we can force it. This is called constrained decoding:

- the API restricts the output to match a specified schema

- it also does a system-level prompt injection to tell the model about the schema

response = litellm.completion(

model=...,

messages=..., # NO NEED TO INCLUDE JSON INSTRUCTIONS IN THE PROMPT ANYMORE!

response_format={

"type": "json_schema",

"json_schema": {

"name": "poetry_classification",

"schema": {

"type": "object",

"properties": {

"is_poem": {"type": "boolean"},

"confidence": {"type": "number"},

"explanation": {"type": "string"}

},

"required": ["is_poem", "confidence", "explanation"],

},

},

},

)

answer = response.choices[0].message.content

result = json.loads(answer)

print(result["is_poem"]) # guaranteed to work

Now json.loads will never fail.

Constrained decoding is a deeper topic we may revisit later.

But for now, the practical takeaway is: use response_format

when you need reliable structured output.

OpenAI API also provides syntactic sugar that lets you parse the response directly into a Python object using Pydantic:

from pydantic import BaseModel

class PoemResult(BaseModel):

is_poem: bool

confidence: float

explanation: str

response = await client.beta.chat.completions.parse(

model=...,

messages=...,

response_format=PoemResult,

)

answer = response.choices[0].message.parsed

print(answer.is_poem) # bool

Outline

LLM APIs: calling LLMs programmatically[done]Structured Output: getting JSON out of LLMs reliably[done]- Evaluation: measuring if the LLM is doing well

- Prompt Engineering: the craft of making the LLM do better

- Multi-Stage Pipelines: scaling up semantic processing

- Implementation: from design to code with coding agents

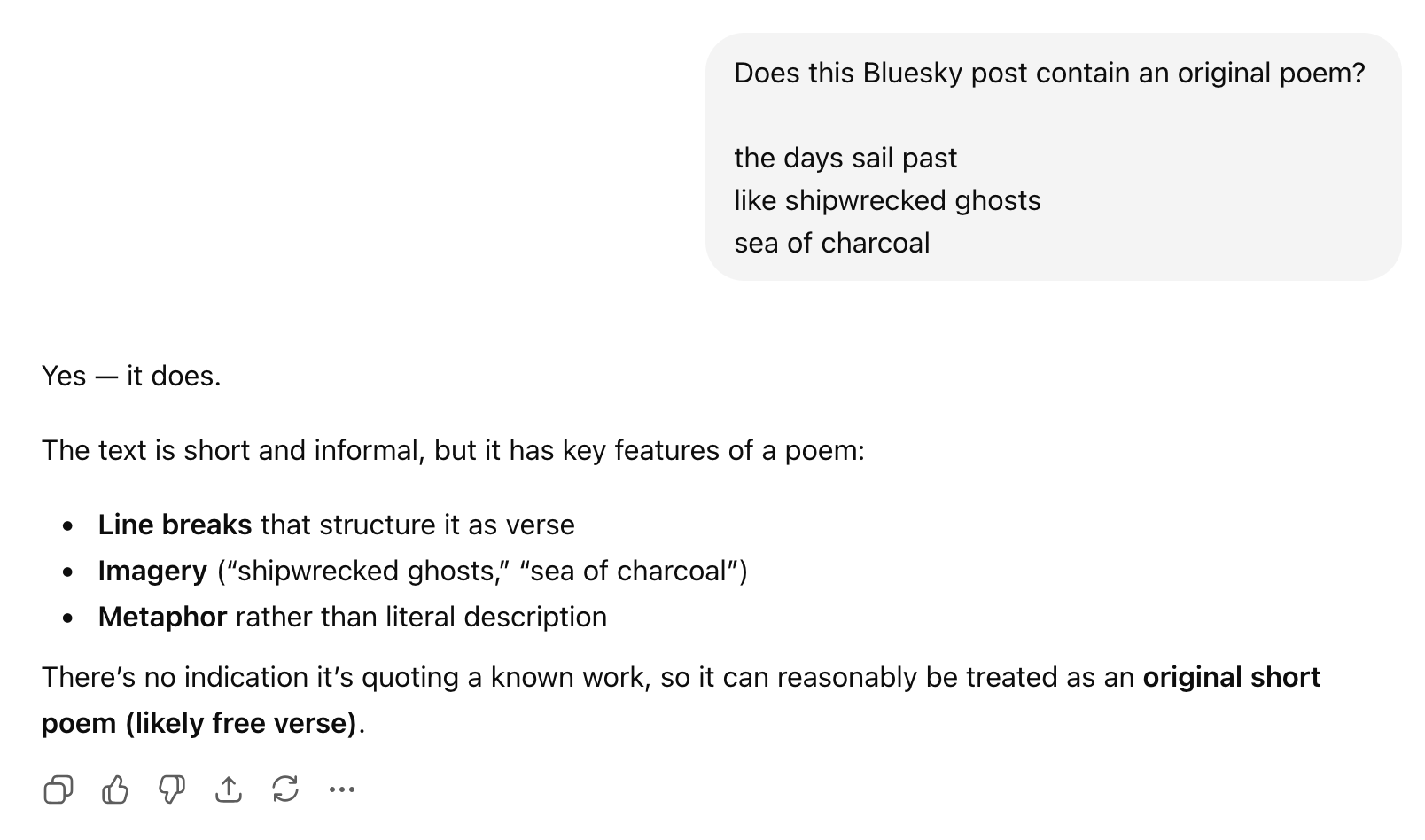

Evaluation

Let's put together what we have so far:

def detect_poem(text):

response = await client.beta.chat.completions.parse(

model="gpt-5-mini",

messages=[

{ "role": "system", "content": "You are a poetry detector. Given the text of a social media post, determine if it is a poem."},

"role": "user", "content": text }

],

response_format=PoemResult,

)

return response.choices[0].message.parsed

# In practice this would be async, but simplified here:

while True:

text = get_post()

result = detect_poem(text)

if result.is_poem:

print("POEM DETECTED!")

print(text)

print(result.explanation)

print("-" * 40)

Q: Are we done? How would you assess if this is any good?

The hard part has shifted

Insight: With modern LLMs, getting code that "works" is easy. You can go from idea to prototype in minutes.

The hard parts of building AI-powered systems are now:

- Measuring how well it works

- Making it work reliably at scale

This is a fundamental shift in software engineering.

What does evaluation look like?

Evaluation looks very different depending on the task:

- Classification (is this a poem? is this urgent?): compare against labeled examples

- Extraction (pull dates from a contract): compare extracted fields against known answers

- Generation (summarize this article, write a reply): harder — may need human judgment or LLM-based evaluation

Our poetry classifier is a classification task, so we can measure it by comparing its predictions against correct labels.

Evaluation datasets

We need a set of examples where we know the right answer:

| Post | Expected |

|---|---|

| "Roses are red / violets are blue" | poem |

| "Just had the best coffee of my life" | trash |

| "the fog comes / on little cat feet" | poem |

| "My cat is sitting on my keyboard again" | trash |

Q: Where would you get data like this for poetry detection? What are pros and cons of different approaches?

Options:

- Hand-label a sample from the firehose (most reliable, slow)

- Find existing datasets (poetry corpora exist, but may not match BlueSky style)

- Use a more capable model as oracle (fast, but introduces its own biases)

The important thing is that the dataset is representative of what your system will actually encounter.

Q: Can you combine these approaches?

Q: How many labeled examples do we need? 10? 100? 1000?

Too few and your metrics are noisy — one misclassification can swing precision by 10%. Too many and you've spent a week labeling BlueSky posts. In practice, 50–200 well-chosen examples is often a good starting point for a classification task. You can always add more later as you discover failure modes.

Metrics: precision and recall

Given an evaluation dataset, we can run our classifier and compare predicted and expected labels:

| Post | Expected | Predicted | Result |

|---|---|---|---|

| "Roses are red / violets are blue" | poem | poem | TP |

| "Just had the best coffee of my life" | trash | trash | TN |

| "the fog comes / on little cat feet" | poem | trash | FN |

| "My cat is sitting on my keyboard again" | trash | poem | FP |

- True positives (TP): poems our user will see

- True negatives (TN): trash no one will see

- False positives (FP): trash that slips through and annoys users

- False negatives (FN): poems our user will miss

From these:

- Precision = TP / (TP + FP) — "Of the posts the user sees, how many are actually poems?"

- Recall = TP / (TP + FN) — "Of all the poems out there, how many does the user see?"

In terms of user experience:

- Low precision → your feed is full of trash

- Low recall → you miss real poems

Q: Which matters more for our poetry monitor — precision or recall?

There's no universal right answer — it depends on what you're building and who it's for.

Running eval in code

We can now run our detector on the evaluation dataset and compute precision and recall:

import json

# Load evaluation dataset

with open("eval_data.json") as f:

eval_data = json.load(f)

# eval_data = [{"post": "Roses are red...", "expected": true}, ...]

tp = fp = fn = 0

for example in eval_data:

predicted = detect_poem(example["post"]).is_poem

expected = example["expected"]

... // accumulate TP, FP, FN counts ...

precision, recall = compute_metrics(tp, fp, fn)

print(f"Precision: {precision:.2f}")

print(f"Recall: {recall:.2f}")

Q: What would you want your eval infrastructure to have?

Building eval infrastructure

Things you probably want to do:

- We want to try different models, confidence thresholds, and prompts - need to separate classification from analysis

- Each API call costs money — need to cache classification results so you can iterate on analysis without re-calling the API (can also use batch APIs!)

- We want to compare experiments — printing numbers to the console doesn't scale, we probably want a notebook with plots and tables

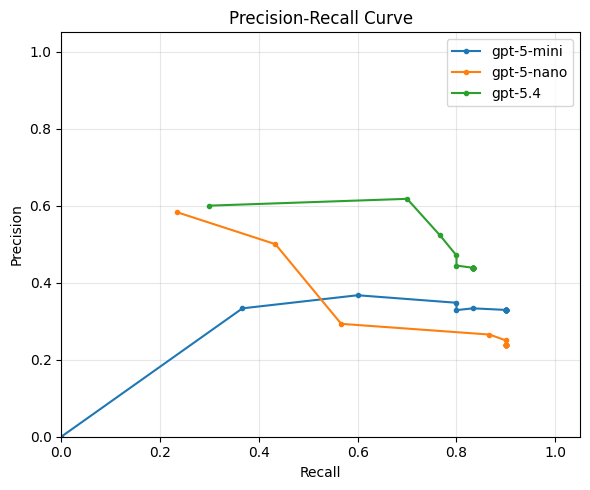

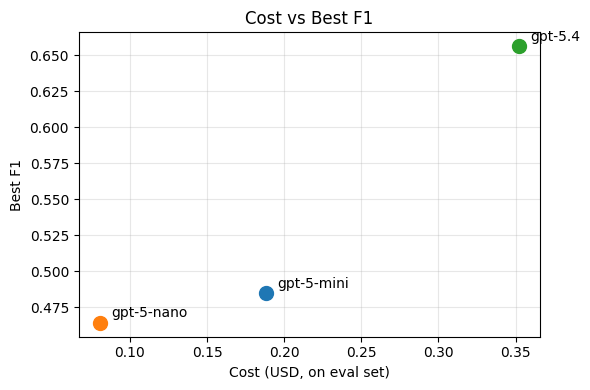

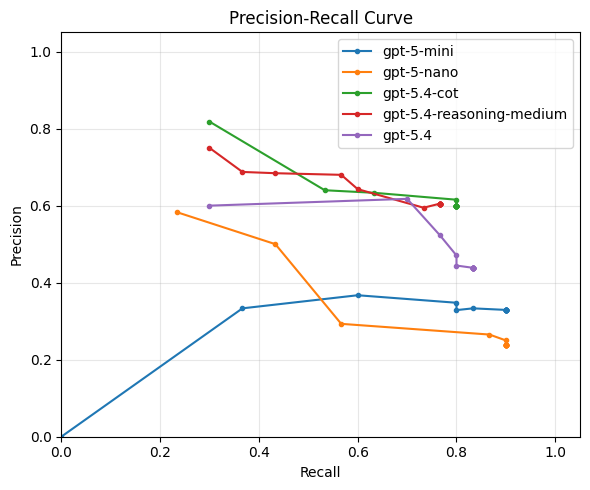

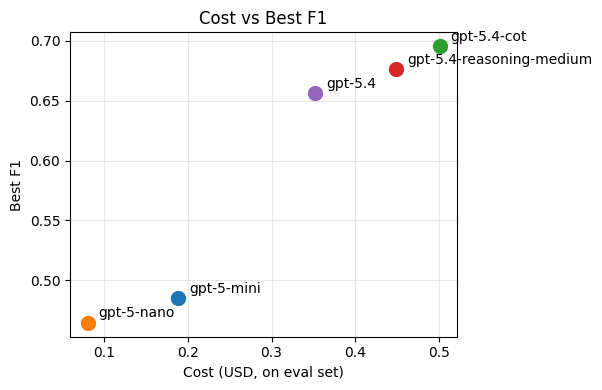

Visualizing the precision/recall tradeoff

Q: Looking at this plot, which model/prompt configuration would you pick? Why?

Precision and recall capture different aspects of quality, but sometimes we want a single number to compare configurations. The F1 score is the harmonic mean of precision and recall:

F1 = 2 · precision · recall / (precision + recall)

It ranges from 0 (worst) to 1 (best) and is high only when both precision and recall are high, which often makes it a useful summary metric for choosing among configurations.

There's often no single "best" configuration — you're trading off precision, recall, and cost. The right choice depends on your application and your users.

Outline

LLM APIs: calling LLMs programmatically[done]Structured Output: getting JSON out of LLMs reliably[done]Evaluation: measuring if the LLM is doing well[done]- Prompt Engineering: the craft of making the LLM do better

- Multi-Stage Pipelines: scaling up semantic processing

- Implementation: from design to code with coding agents

Now that we know how well we're doing, we can start thinking about how to do better (in terms of both quality and cost).

Q: What can we tweak in our call to the LLM to improve the quality of our poetry detector?

Some options:

- Change the prompt

- Add more precise instructions

- If you have a complex condition (e.g. "either rhymes or is a haiku"), ask about each separately and then combine the results in code

- Add a persona to the system prompt (e.g. "You are a literary critic with a deep knowledge of poetry.")

- Add few-shot examples of poems and non-poems

- Use prompting techniques like chain-of-thought to encourage the model to think before answering

- Use a more capable model (e.g.

gpt-5.4instead ofgpt-5-mini) - Change model parameters

- Older models had "temperature" to control randomness

- Newer models have "reasoning effort" to control how hard the model thinks before answering

All of this tweaking is part of the black art of prompt engineering. Historically, this term only referred to tweaking the prompt, but now it increasingly encompasses model parameters too.

We are not going to spend much time on this, because it's a black art and not science.

- If you want to learn about advanced prompting techniques, check out the Prompting Guide

- There are also tools like DSPy that automatically optimize your prompt (e.g. by adding few-shot examples to maximize performance on your eval set)

Just out of curiosity, let's see if can improve on our best performing baseline, gpt-5.4, by:

- Adding medium reasoning effort

- Adding manual chain-of-thought by asking the model to explain its reasoning before giving the answer (instead of after)

Outline

LLM APIs: calling LLMs programmatically[done]Structured Output: getting JSON out of LLMs reliably[done]Evaluation: measuring if the LLM is doing well[done]Prompt Engineering: the craft of making the LLM do better[done]- Multi-Stage Pipelines: scaling up semantic processing

- Implementation: from design to code with coding agents

Does our poetry detector scale?

┌──────────────┐ ┌──────────────┐

│ │──┐ 1.4M │ │

│ Bluesky │──┼─────────▶│ LLM │──▶ ...

│ firehose API │──┘ posts / │ (poetry / │

│ │ day │ not poetry) │

└──────────────┘ └──────────────┘

If we ran our current best-performing implementation in production,

we'd be calling gpt-5.4 1.4 million times per day.

This is:

- expensive

- slow

- and likely unnecessary (most BlueSky posts are obviously not poems)

Multi-stage pipelines

Idea: use cheap pre-filters to filter out obvious trash!

Q: What criteria would you use to filter out obvious non-poems?

Some ideas:

- Filter by metadata (e.g. language, if we only want English poems)

- Shape-based filter (min 3 lines? limit average line length? no bullet points? etc.)

- Keyword-based filter (could be used as a negative filter for poetry)

- Not for poetry, but for other tasks: filter by similarity to known-good examples using a cheap embedding model

- Use a cheaper LLM (e.g.

gpt-5-nanoorgpt-5-mini) with a high-recall setting - Use a simpler prompt with a high-recall setting

Pipeline design

A reasonable pipeline for the poetry detector might look like this:

1.4M posts/day

│

▼

┌───────────────────┐

│ Metadata filter │ > 20 chars, language = en $0

│ │

└─────────┬─────────┘

~60% │ 840K posts/day

▼

┌───────────────────┐

│ Shape filter │ ≥ 3 lines, avg line < 60 chars $0

│ │

└─────────┬─────────┘

~8% │ 67K posts/day

▼

┌───────────────────┐

│ Profanity filter │ keyword-based $0

│ │

└─────────┬─────────┘

~90% │ 60K posts/day

▼

┌───────────────────┐

│ Cheap LLM filter │ gpt-5-mini, threshold 0.8 ~$1

│ │

└─────────┬─────────┘

~10% │ 6K posts/day

▼

┌───────────────────┐

│ Smart LLM filter │ gpt-5.4 ~$1

│ (poetry / not) │

└─────────┬─────────┘

│

▼

poems!

By adding cheap pre-filters and a two-tier LLM strategy, we reduced the expensive LLM calls from 1.4M to ~6K per day — and the total pipeline costs roughly ~$2/day instead of ~$196/day.

Outline

LLM APIs: calling LLMs programmatically[done]Structured Output: getting JSON out of LLMs reliably[done]Evaluation: measuring if the LLM is doing well[done]Prompt Engineering: the craft of making the LLM do better[done]Multi-Stage Pipelines: scaling up semantic processing[done]- Implementation: from design to code with coding agents

Vibe Coding vs Co-Design

We know what we want to build; now what? You'll probably build it with a coding agent — and a system this size is small enough to build in a single session.

Should you just vibe code it?

Vibe coding: describe what you want in natural language, let the AI generate code, and accept the result without fully reading or understanding it.

Recent studies paint a clear picture:

- Vibe coding is fast but flawed: practitioners are drawn to the speed, but 68% perceive the resulting code as fragile or error-prone, and 36% skip quality assurance entirely (Fawzy et al., 2025).

- Professional developers don't vibe — they control. In a study of 99 experienced developers, not a single one said agents could replace human decision-making. Instead, they plan before coding, review every change, and leverage their SE expertise to supervise the agent (Huang et al., 2025).

In this class we teach you to co-design software with coding agents: you bring the design thinking — abstraction, modularity, and engineering judgment — and the agent brings the speed.

Starting point

Here's our simple script that listens to the BlueSky firehose and classifies every post:

async def listen_to_websocket() -> None:

async with websockets.connect(uri) as websocket:

while True:

text = await get_post(websocket)

result = await classify_post(text)

if result.is_poem:

print(text)

Now we want to turn this into a real system that implements our multi-stage pipeline design. You hand this to a coding agent and say "add the pipeline stages we discussed." Here is some code it might produce.

Snippet 1: The pipeline

async def process_post(text: str) -> Classification | None:

# Stage 1: metadata filter

if len(text) < 20 or detect_language(text) != "en":

return None

# Stage 2: shape filter

lines = text.strip().split("\n")

if len(lines) < 3 or avg_line_length(lines) > 60:

return None

# Stage 3: profanity filter

if contains_profanity(text):

return None

# Stage 4: cheap LLM

cheap_result = await classify_with_llm(text, model="gpt-5-mini")

if cheap_result.confidence < 0.8:

return None

# Stage 5: smart LLM

return await classify_with_llm(text, model="gpt-5.4")

Q: What's wrong with this?

Problem: no abstraction over pipeline stages

I see ghosts of unnamed abstractions!!!

Every stage is a different function with a different signature, and the pipeline logic (the sequence of filters) is tangled with the filtering logic.

What if we want to:

- Reorder stages? We have to move code blocks around.

- Add/remove a stage? We edit the middle of a function.

- Reuse a stage in a different pipeline? We copy-paste.

- Test a single stage in isolation? Awkward.

Better design: make "pipeline stage" an explicit abstraction.

class Stage:

"""A single stage in the classification pipeline."""

name: str

def matches(self, text: str) -> bool:

"""Returns True if the post should pass through to the next stage."""

...

class MetadataFilter(Stage):

name = "metadata"

def matches(self, text: str) -> bool:

return len(text) >= 20 and detect_language(text) == "en"

class ShapeFilter(Stage):

...

class LLMFilter(Stage):

def __init__(self, model: str, threshold: float):

self.model = model

self.threshold = threshold

async def matches(self, text: str) -> bool:

result = await classify_with_llm(text, self.model)

return result.confidence >= self.threshold

Now the pipeline is just a list:

pipeline: list[Stage] = [

MetadataFilter(),

ShapeFilter(),

ProfanityFilter(),

LLMFilter(model="gpt-5-mini", threshold=0.8),

LLMFilter(model="gpt-5.4", threshold=0.5),

]

Configurable, testable, extensible — all because we named the abstraction.

Snippet 2: The main loop

async def listen_to_websocket() -> None:

async with websockets.connect(uri) as websocket:

while True:

text = await get_post(websocket)

result = await run_pipeline(text, pipeline)

if result is not None and result.is_poem:

print(text)

Q: What's wrong with this?

Problem: we're processing posts one at a time

The firehose produces ~16 posts/second. Each LLM call takes ~0.5–2 seconds.

We're await-ing each post before reading the next one —

which means we fall further behind with every second.

Better design: decouple reading from processing with a producer-consumer pattern.

queue: asyncio.Queue[str] = asyncio.Queue(maxsize=1000)

async def producer(queue: asyncio.Queue[str]) -> None:

"""Reads posts from the firehose and enqueues them."""

async with websockets.connect(uri) as websocket:

while True:

text = await get_post(websocket)

await queue.put(text)

async def consumer(queue: asyncio.Queue[str], pipeline: list[Stage]) -> None:

"""Takes posts from the queue and runs them through the pipeline."""

while True:

text = await queue.get()

result = await run_pipeline(text, pipeline)

if result is not None and result.is_poem:

print(text)

async def main() -> None:

queue = asyncio.Queue(maxsize=1000)

async with asyncio.TaskGroup() as tg:

tg.create_task(producer(queue))

# Multiple consumers to parallelize LLM calls

for _ in range(10):

tg.create_task(consumer(queue, pipeline))

Now we have one producer filling the queue, and 10 consumers draining it in parallel — each one independently making LLM calls without blocking the others.

Unit 2: Document Scanner

In the first unit, we talked about how LLMs make previously challenging tasks involving the semantics of text tractable and cheap. The interpretive abilities of GenAI systems continue to impress in domains beyond plain text-based understanding -- next we will revisit the capabilities we can expect from our programs in working with rich documents and images.

People have a broad range of PDFs, images, presentations, spreadsheets, and so on in their workflows. Until now, working with documents in these formats required tools like:

- Specialized parsers and editors for each file format

- Models trained for a specific task (e.g. OCR or “optical character recognition”, recognizing faces or people in images)

While tools like these are still useful, many use cases can be served by sending files to a LLM and having it extract structured data. ChatGPT and Claude explicitly advertise this feature, for example, and applications are showing up to summarize notes, do professional workflows like quotes and billing, people talk about using ChatGPT to review their tax returns, and it's clear that AI systems can do basic image recognition and text extraction tasks.

Motivating Example: Track Spending on Paper Receipts

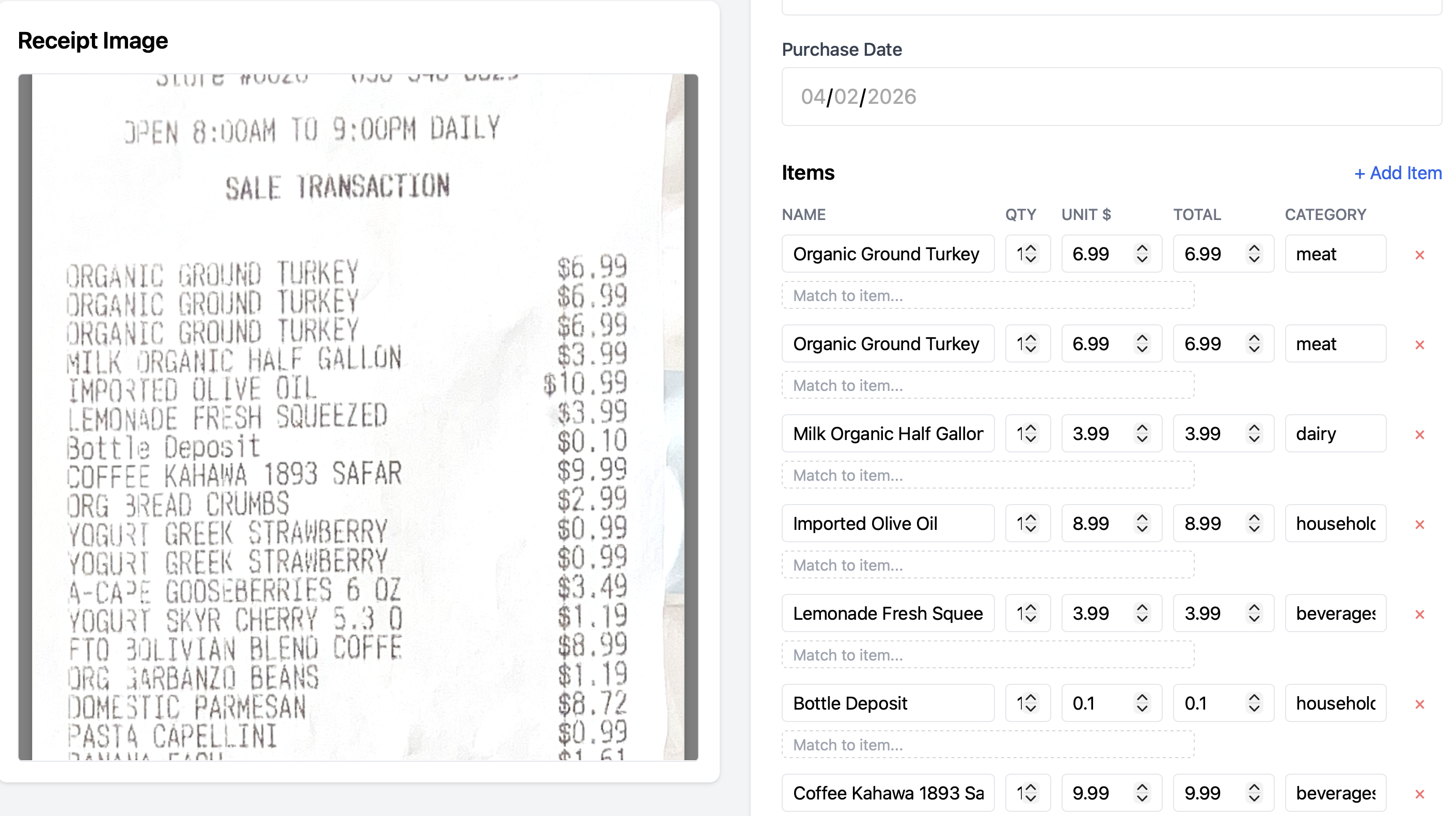

What if we wanted to track our spending (on groceries, clothes, etc), but we mostly have paper receipts, not digital transactions? It would be useful to have a scanning app that can take photos of our receipts and recover structured data from them, and then put the user in the loop to correct errors and provide guidance on categorization.

Q: What mistakes do you see in the above structured data?

Data Source LLM Processing User Review Downstream

┌──────────────┐ ┌──────────────┐ ┌──────────────┐ ┌──────────────┐

│ │ │ │ │ │ │ │

│ photos / │─────▶│ extract │──────────────────────▶│ verify / │─────▶│ store / │

│ scans / │ │ structured │ structured output │ correct │ │ aggregate / │

│ PDFs │ │ data │ (items, totals, │ │ │ visualize │

│ │ │ │ dates, ...) └──────┬───────┘ └──────────────┘

└──────────────┘ └──────────────┘ │

▲ │

│ user corrections, │

└────────── categories, metadata ─────┘

Outline

- Mixed-media APIs: passing images and PDFs alongside text

- Working with an agent: pushing back on defaults, keeping project context, and building the system at a pace you can internalize

- Types as design: using types to structure abstractions and communication with an agent

- Putting the user in the loop: corrections and categories feeding back into extraction

Mixed-media Queries

Modern models support mixes of text, images, and other inputs. Passing images directly with a request is broadly available:

- OpenAI: Giving a model images as input

- Claude: Base64-encoded image example

- Gemini: Passing inline image data

Similar APIs exist for documents like PDFs (Gemini docs), and even speech, audio, and video.

Documents and images are an extremely broad category of data, ranging from candid photos to cartoons to artwork to structured data like receipts (above) to academic papers and more. Each application that works with documents semantically likely picks a subset and either looks for or imposes some structure on them.

For these notes, we're going to focus on a particular kind of structured document: images of paper receipts like the one above. We will build a web application, targeted at fairly general users, for doing this.

We will make a few assumptions; a truly mass-market app would probably need to generalize these eventually. In particular, we'll assume a US/English context, so expect $USD on receipts, and no language options. We'll build it as a web application that could eventually make its way into a phone app.

Outline

Mixed-media APIs: passing images and PDFs alongside text[done]- Working with an agent: pushing back on defaults, keeping project context, and building the system at a pace you can internalize

- Types as design: using types to structure abstractions and communication with an agent

- Putting the user in the loop: corrections and categories feeding back into extraction

Starting from Scratch with an Agent

Let's start from an empty directory, and just the discussion above to guide us. My current favorite coding assistant is Claude Code; I'm curious what progress we can make quickly, and what decision points come up.

Q: What are some decisions you expect to have to make?

There are a few strategies we could use for working with the agent. We could just write a short sentence saying what we want:

That's a lot of decisions to make! Also, Claude doesn't always give us the same options; small changes in what we say produce different reactions:

Q: What are some differences? How might they affect the long-term trajectory of the app?

Q: Which of the mentioned tools have you heard of? Which haven't you heard of?

Q: How do these responses make you feel?

The prompt we gave is a good one for getting a sense of the landscape of the application. However, it's a bad one for having Claude do work. In particular I'm extremely suspicious of “Let me know and I'll get building” in the second interaction -- I am nowhere near ready to “build” all of that!

One thing we have done with this interaction is learned that we need to think about a few things. We may not have thought of these, or maybe we did, depending on our experience as developers of this kind of application.

- The frontend (the stuff that runs in the user's browser)

- The backend (the stuff that runs on a server somewhere)

- The storage (the place where user data is persisted on disk)

- The GenAI part (the Image -> ReceiptData function)

As an engineer, my preference is to try to build things incrementally. This is what Claude is hinting at with the “MVP” in the first interaction (jargon meaning “Minimum Viable Product”). I tend to find it useful to work on components I know well, and then for anything I don't know, see how it connects to the component I understand well.

Given where we are situated in this class, we can assume we know something about building the GenAI part: we need to set up API tokens, make API calls to a model with prompts and responses, think about evaluation and test inputs, and so on. We can keep in mind that this will be in the context of a larger application as we do that.

So let's proceed with that in mind:

Two things happened here:

- I made a choice to use a JavaScript-focused implementation (TypeScript). This is a bit of a matter of taste: I have experience with it, I like static types, but also it's useful in the context of this class to show that even if you were more comfortable with Python, Claude is perfectly comfortable with TypeScript.

- Claude rushed into trying to set up a whole TypeScript project, ending on a

prompt to run

npm init, which is the setup command for creating a Node.js project. I'm not ready for that!

Pushing Back

So, I decided to tell Claude that it should not do this anymore!

❯ I would like to know more about what you're going to do

and why. Can you write out the plan for this part to a

receipt-design.md file?

⏺ Write(receipt-design.md)

⎿ Wrote 81 lines to receipt-design.md

1 # Receipt Parser — Design

… +71 lines (ctrl+o to expand)

Recalled 1 memory, wrote 3 memories (ctrl+o to expand)

⏺ Written to receipt-design.md. The highlights:

- Claude vision + tool use to extract structured receipt

data in one step [...]

Take a look and let me know if you'd like to change

anything before I start building.

❯ What memories did you just write?

⏺ I saved one feedback memory: "Explain before coding" — a

note that you want me to present the design and reasoning

before jumping into implementation, based on you stopping

me when I started running commands without explaining.

❯ Oh interesting. Can you put that in Claude.md?

Searched for 1 pattern (ctrl+o to expand)

⏺ Write(CLAUDE.md)

⎿ Wrote 3 lines to CLAUDE.md

1 # Project Guidelines

2

3 - Present the design and rationale before jum

ping into code. For any non-trivial piece of

work, explain the approach and get buy-in bef

ore implementing.

⏺ Now I'll remove the memory version since CLAUDE.md is the

better home for it — it's loaded automatically and doesn't

need the memory system.

⏺ Bash(rm

/Users/joe/.claude/projects/-Users-joe-src-receipt-sca

nner-demo/memory/feedback_explain_before_coding.md)

⎿ Done

Recalled 1 memory, wrote 1 memory (ctrl+o to expand)

⏺ Done. The guideline is now in CLAUDE.md at the project

root and the memory entry has been cleaned up.

There's a few things that just happened, mostly having to do with my workflow preferences. You might have different ones, but you should have some. Coding agents come with defaults that push us along a particular workflow – you do not have to and should not accept this default without questioning it.

Here's what happened and why I did it:

- I asked it to save the design to a file rather than just having it in chat. Now I can commit and push the design, more easily share it with teammates, refer to it in a new session, etc.

- I had it save the design file in the repository. Claude has its own

notion of state (I've seen it make plan files in

~/.claude). For some workflows that is probably good... but if I code on another computer (or my teammate does), we and our agents benefit from access to it. - I pushed back on Claude's bias towards implementing. Not only do I not want it to do so here, but I don't want it to do so in general on this project! One thing that is cool is that Claude picked up on my pushback without me writing a separate message: in other interactions I've had to say more explicitly “I don't like it when you prompt me to generate code right away. Remember this preference.”

- I noticed (paying attention to the output) that Claude saved it to a

“memory” and asked about it. Now, I really dislike how memories get

configured! Memories are stored in state local to the user, again in

something like

~/.claude/projects/.../memory/.... As an engineer, I want (most of) my settings to be per-project and version controlled, not per-machine or per-user. - I pointed this out to Claude and it moved it for me and “admitted” that

CLAUDE.mdwas a better place for it! My take on this line—“CLAUDE.md is the better home for it”—is not that Claude “thinks” this is “globally true” or anything (if it did it wouldn't have put it in a memory). Rather, it's agreeing with me; these tools tend towards agreeing with us because

- it would be way more frustrating if they didn't

- different programmers do have preferences and good arguments that conflict, so the tool follows your lead, and

- likely some indirect effects of it making us feel good and continue to use the product

Q: Have you used memories or CLAUDE.md or the equivalent for your favorite agent?

Q: Have you ever felt “rushed along” by your coding agent?

Q: What other strategies have you used to manage design documents with your coding agent?

Building, Designing, and Learning, with Claude

With that useful setup work done, let's move on to building this application. As we do so, we're going to be reflective about what we are getting out of the agent, and where we should be pushing back.When I work on a new project, I find that I do a lot of learning about the system I'm building. One of the best ways to develop that understanding is to build incrementally. Claude listed a lot of things we need – persistent storage, a frontend, a backend, the AI subsystem for parsing, and so on. Some of these we may understand better than others. Let's build one at a time; I'm going to start with the AI subsystem, and then later build the user experience on top of it.

Notice that I'm not trying to fully engage with the broad design file yet. It's useful context, but I want to build one step at a time.

Q: What's a good prompt to move forward with that? What information is the agent likely to ask for in a followup?

Let's try something:

This churns for a while and produces a running starting point.

A few things to notice:

- I provided the sample code for using the TritonAI stuff, and the docs page for the models

- I didn't provide a ton of information about what I wanted out of the CLI. I could have done a lot more work to spec this out! But also it's useful to see something working (e.g. can these vision APIs do what I want with a receipt?)

Along the way:

- I provided a sample image (similar to the one in the screenshot above) to test on when prompted by Claude. I just copy-pasted a photo I had of a receipt in my house.

- Claude got error messages relating to the model. I realized I had given out-of-date model information and 401 errors came back. Claude itself went and used the discovery API on TritonAI by itself, and figured out which models should be used.

- For now it chose claude-opus, which is rather self-serving of Anthropic 🙃, and is fairly expensive. We may want to revisit this.

- Claude got TypeScript error messages. It had not included some dependencies

(like

dotenv) and it made a few small errors about JavaScript/TypeScript setup. It fixed them and rewrote code.

We should take a moment to acknowledge how impressive this is! My sample was in Python, I asked it to adapt it to TypeScript and test it out, and it generated a running example. It took about 2-3 minutes. I'm a reasonably competent typist (though I'm not winning any words per minute awards) and at least a median tool user, and getting something like this set up takes me much more than 2-3 minutes!

Of course, along the way Claude made a ton of design decisions without consulting us. It picked a system prompt, picked default categories for items on receipts, picked data representations for items, and more.

Q: What should we do next?

Q: Look at the commit Claude made. What is a design decision you agree with? One you don't agree with?

My choice was to next do some work on eval. I want to know where the good and bad receipt parsing is. If you're interested, here's a long-ish capture where I go through that (for a more casual reading, you don't have to look at the whole thing):

The summary was this:

- I had a handful of personal receipts to use as eval data

- The Opus model is fairly trustworthy – a good way to make test data was for me to run the script with opus and check the difference to the receipt myself.

api-mistral-small-3.2-2506made more mistakes, but is dramatically cheaper and faster. So my general strategy is to get close-to-ground-truth data with opus, and then eval that data against other models- The definition of a “match” is tricky: models are non-deterministic and

sometimes return a string like

"YOGURT STRAW BANAN"vs"6 Pack Yogurt Straw Banana"or other variations. I went back and forth with Claude on different choices of fuzzy string matching to get something plausible - It seems unlikely the models will usefully get the human item name that a

user wants, and noise is inevitable. Receipts have line items for things like

bottle deposits or discounts, weird abbreviations like

VNYANDARC(which refers to vineyard for a bottle of wine), and more. We can improve this long tail with prompting and smart logic, but this kind of thing is where the user comes in.

You can see the relevant changes at this commit

Outline

Mixed-media APIs: passing images and PDFs alongside text[done]Working with an agent: pushing back on defaults, keeping project context, and building the system at a pace you can internalize[done]- Types as design: using types to structure abstractions and communication with an agent

- Putting the user in the loop: corrections and categories feeding back into extraction

A User Interface

We know the AI part of the system can be made to work; there's more eval to do,

we probably want to try more cameras and stores and scan images with more junk

in the background, etc, but it's plausible. Next we can build an application

around this. We could make a lot of decisions about how we want to do that. We

could build an app (like installable via the app store), a standalone program

(like with an installer .exe or .dmg), a web application, and more.

Implementing a Server

I'm choosing a web application here, but the other options are fine and come with their own tradeoffs. We will want a server that stores persistent data and a pile of JavaScript on the frontend that can talk to and render the information stored in the server. I have a strong preference for starting from data definitions, so let's do that:Q: What do you think about the proposed schema?

I see one thing wrong immediately: it's super bad practice to store money as floating point! We never want to do arithmetic on prices and see a price like 0.6000000001. Standard best practice is to store a whole number of cents as an integer. This is a clear place where averaging over all the code has probably given Claude a bad completion. Let's see how it reacts!

Notice that Claude is quick to agree with us calling it out. We can actually convince Claude of many things if we try. A useful (wrong, but useful) mental model is that it's giving the most likely autocomplete of a developer who is quite competent across a breathtaking array of technologies, but exists only to help you write stuff. That completion includes “you're right!” when you tell it about a well-known programming pattern.

Also, Claude is being pushy, saying “start building” again. That's annoying. Let's tell it not to be, and that we'd like to think about the design. Only then do we want to go on.

Below we make that update to the config and then look at the planned spec. Our goal is to get to the request handling part of a web server and the database that stores the data. My goal here is to get to the point where I can talk about types. By that I mean TypeScript types and database schemas that have field names, relations, and so on.

Q: What do you think of the types shown in the editor at the end? Are they what you expected? What questions do you have about them?

Personally, I'm wondering which of these are storage types and which of these

are logical or operational types. As in, will all three of these get stored

in the database? They were put in db.ts. I also want to go further and see

where the types will be used. Before code gets generated, this really helps me

get a picture of what is going to go where.

I also tell Claude to clean up the interface. I want my TODOs and plans and other things in files, not in transient session state in Claude's UI.

Here we are getting to some sketches of code. I again want to know what the story is with types. Generally I need type-level data to think about abstractions, what data goes where, and so on. So I'm consistent about asking Claude for it. In particular I want to know what kinds of data I can expect to see (and autocomplete in my own editor!) on the requests, and what should go back in each response.

So I prompt for that, and end up learning a lot about the API in the process:

Q: Describe the kind of error I was worried about with incremental edits. What's an example of what it would look like to make that mistake and accidentally delete stuff?

Q: Is what Claude said about the middle type parameter accurate? How would you know?

There's some deliberate learning and engineering happening here:

- It's quite easy for programming with generic

requestandresponseobjects to degenerate into programming against loose JSON-like structures. I'd like to get from the HTTP types into better checked types as soon as possible. - The types help me talk about abstraction boundaries, AND should help Claude talk about them as well. If we keep using the same types for different functions, etc, it's literally fewer tokens than trying to think about a JSON schema over and over. And we can more easily write these type names into documentation, etc.

- I was honestly not sure how TypeScript and Express interact. I've done some Express programming (the library that sets up the routes and the req/res pairs), but never typed. So I was legitimately learning what that API does. I know that TypeScript types in many places document assumptions rather than enforcing a type, so that wasn't surprising to me (though it may be to you!), and I wanted to think about where those boundaries are in this code.

In cases like these it's helpful for me to not be passive – Claude's default

choices are not the ones I want. I'm still saving a lot of time and typing (I

never opened a docs page for Express, for example). I'm thinking a little bit

about if I can clean up these types at all, what validation will look like,

what zod is, and so on. Those are all good things for me to think about to

learn more about the system I'm building.

Overall I think I grok what's going on, so I'm happy moving on.

Here I have a useful conversation about logic and representation, and the type-based thinking helps me out a lot. I am very skeptical of Claude's choice to implement receipt update as “delete and re-add everything.” That just seems wrong and like it will eventually break some relationship between database tables, end up being very inefficient in a large batch update someday, etc. So I push back, and we decide that the receipt items can have their own notion of identity.

Then we start doing a bit of API design – what should an “edit” to a receipt look like? We settle on a standard operator-based update description, where the receipt updates can give a set of `ItemAdd | ItemUpdate | ItemDelete` operations that describe what to change. This is a huge improvement, opens up possibilities of having undo later (if we persist the changes), makes the API more flexible and allows just referring to small, specific edits rather than reconstructing the whole receipt every time, etc.So now we have a decent sense of two components' types:Q: Have you ever seen operations expressed in a datatype like this before?

- The request handlers, which get HTTP requests from the user (presumably their browser, but also testable from the command-line or from programs), and respond with JSON

- The db functions, which will store and retrieve things from the database, and so some work to apply logic around updates

There seems to be a sensible place from which to get some code down. One observation I'd like to make – this point is often where, as a student, you would get the "specification" as a programming assignment writeup with type signatures and start filling in "implementation". Look at how much work we've done (and the course staff for your courses have likely done) to decide all of this! What gets a type, what interface will work well or be error prone, what technology to use, and so on. A useful analogy for students who are experiencing this shift to agent-based coding is that if you can write a high-quality programming assignment writeup, you can probably write reasonable specs for agents.

Those skills have always been important, but now they are central.

Q: As you watch, think about this: What were some other options for how to refactor

parseReceiptto accept theBufferrather than a path?

As Claude goes through the implementation, I have some coaching to do.

- Because of the signature

parseReceipt(imagePath, model)API we set up for extraction, Claude aims for a path-based input. This requires saving to a temp file (which thismulterlibrary, which I just learned about, handles). But that's annoying, I would rather not save a file just to read it back into memory one step later. I have to nudge Claude to think this through, and we do an API update to make it reasonable to pass this through. - I get a little testy with Claude because it seemingly ignored some of my remarks about memories and Claude.md earlier, so I make it listen to me. I also try to tone down the empty praise.

With this, we have an implementation that's worth testing. I want to move on to writing enough tests to make sure this all runs, then we can hook it up to a browser-based frontend.

More learning about the available APIs. Also we add a new API endpoint for testing that we have a pretty good argument is good for our future selves as well – we can send receipts as JSON data to create them instead of just parsing them. Claude makes a good point that we may want a manual receipt-input interface, so the endpoint is just generally useful.

I'm very happy that Claude is writing this test code and not me. I've written a lot of test code like this in my life and... I see no major personal loss in never doing it again. I can see what it's doing, I know how to add more tests as we go along, I appreciate the confidence that everything runs, and feel good about moving on.

It's worth taking a bit of time to do some cleanup and write a few more tests. I want to keep the design document up to date, and make sure we test the various flavors of update at least a little bit before moving on:

Implementing a Frontend

We now have all the logic for parsing and storing receipts exposed through a web server. We've made a number of technology decisions and have basic tests in place to make sure we can do all the receipt work programmatically. Next is to build the user interface. I go into this with an attitude of keeping things simple; mainly I want to see how to connect a frontend with incremental user corrections to the infrastructure we have.

This is an interesting case where my experience directly comes into play, and Claude missed what I think was a straightforward, good idea. IndexedDB is a standard feature in browsers for storing reasonable-sized data (hundreds of MB), so it's a natural choice rather than storing images in memory and losing them on page navigation. Of course, this is a place where I have particular expertise, so I can see missing this (and maybe it will cause problems later that I don't know about).

This is one of those cases where a non-expert would have “unknown unknowns” – there was no indication there were options other than the ones Claude suggested. This suggests that in situations where we're unhappy with Claude's suggestions, it can be worth pushing back (“are you sure there's no other way?”), talking to experts, doing some external research, or asking it in a fresh session to try and get a broader perspective.

Some weird things happened with the UI in this clip (I think I misunderstood what file was being presented either due to scroll or the layered combination of terminal stuff I have running to record) so there are some blips.

The relevant part is at the end – I noticed that Claude had duplicated the receipt types from the server to the client code. While it may at some point make sense for them to differ, it might never make sense and it certainly is a blatant duplication now. I was confused at first and thought they may have actually differed on nullability, but they were effectively identical. This kind of housekeeping is important – the more duplication the more chance there is for drift, the more context is taken up with refactors or field additions, etc. This is a place where a principle that applies to human understanding of code matters for the agent as well!

One thing that was nice in the ensuing series of edits and builds (clipped out

because there's not much to see in the recording, just lots of flashing diffs

and error messages and confirmations) is that Claude figured out the

import/build details of sharing the types.ts file across the server and

client. Those details were almost certainly going to be boring and frustrating

and derail my design and coding thought processes, and instead I stayed pretty

focused.

With that refactoring tip Claude went ahead and implemented and then I let it start up some “Vite” servers and test them. I realized I had no idea what was going on and asked for a bit more clarification and some help with the logistics of running and testing myself:

At this point I run npm run dev myself and visit the app in a browser.

I noticed a few things, like no ability to delete items and some bad scroll behavior. At this point, we are into a test-refine-design workflow on the user experience. We can apply the same kinds of thinking to adding new features, checking them against a solid type-based design, getting feedback, and so on.

Outline

Mixed-media APIs: passing images and PDFs alongside text[done]Working with an agent: pushing back on defaults, keeping project context, and building the system at a pace you can internalize[done]Types as design: using types to structure abstractions and communication with an agent[done]- Putting the user in the loop: corrections and categories feeding back into extraction

Putting the User in the Loop

So far, the categories are just a hardcoded string that Claude picked for us. Let's add the ability for users to change them, and then have them become a part of the prompt going forward.

Q: Pick some of the 1-6 options Claude gave us and come up with a response – a followup question, a refinement, or a disagreement. Don't agree with all 6. Any overall design reactions.

These are interesting! This is a moment where I feel like I'm actually co-designing. Typically the design space of user interfaces is pretty wide open, and it's nice to think through a number of ideas related to how this is going to work. Items 3, 4, and 5 are interesting because they are the backend data consistency issues that are directly a consequence of the user interface I proposed.

I don't have any major objections, this seems like a right way to go (at this point there's almost certainly no the right way to go). I proceed by giving pretty minor point-by-point feedback, and have some new ideas along the way:

I have a moment of doubt – shouldn't we give Categories an identity and not have these strings all around? For better or worse I'm mollified by Claude's confidence that it will be an easy fix later.

But I also have the idea that we can lean on the LLM to generate the initial set of categories. At least for what I want to build (and learn about) this is interesting because we impose basically no app structure on the categories and let them be determined solely by the model and the user working together through the interface we give them.

In the next clip I just let Claude cook for a bit. I'm scanning the code step-by-step, and thanks to the Claude.md I set up, I'm getting fairly step by step code. I don't have a lot of comments for this code; it does more or less what I expect. I don't plan to find any new major abstractions here.

There's definitely some work that can be done in the future around the

dynamic prompt generation. It's still very string-template-y. But what Claude

does for now with putting in the helpers learnCategory and

buildSystemPrompt(knownCategories) is about what I'd try at first, and does

abstract out where the categories are. I do have some concern that the type

Category was changed to the type string in a ReceiptItem, which is a sort

of loss. There may be some type design we can do to make Category mean

“string that is one from this list”, but given that we want to let the LLM

generate some of these, a static guarantee becomes very tricky.

If I really wanted to experiment, I may try to parameterize over some kind of

category-collection strategy to make it easier to experiment (just unioning

over existing ReceiptItem categories; in the future including other users'

suggestions to seed the system, having different seed sets depending on what

kind of receipt it is). That feels like premature abstraction, and I'm super

interested in getting to the user interface, because I want to understand the

look and feel. So I enjoy the wonders of code creation getting me there:

Now I want to get some UI code going. I redescribe the combo box, but the whole idea is in context so it's just the reasonable next step. More payoff from our good design discussion earlier; the space of reasonable completions of code given all the guidance we've given is pretty narrow here!

I have more or less no comments on this code at first. I noticed that there was

an opportunity for a helper with the repeated configuration of the fetching

code (it keeps repeating application/json and some other config parameters).

That could be cleaned up. I just made a mental note; I probably should have

added it to the real TODOs so I don't forget that there's a refactoring

opportunity. However, focus matters, too, and I want to test a UI. Here's how

my first test looked: